Thanks to this detailed output, we now also now the original IP address ("ipAddress": "10.10.204.124") and the host name ("requestedHostname": "xyz-node1").

"transitioningMessage": "waiting to register with Kubernetes", "message": "waiting to register with Kubernetes", $ curl -s -u token-xxxxx:secret | jq -r '.data | select(.clusterId = "c-zs42v")' Even though the node's name is shown as "null", we can still query the API and use jq to filter the json output for a specific cluster: The check_rancher2 monitoring plugin reads the node information from the Rancher 2 API (accessible under the /v3 path). Node lookup and deletion via Rancher 2 API We know the reason why - but we still need to clean this up. As the cluster disappeared, the user thought all is good and went on to create another cluster (this time successfully).īut - as our monitoring shows - something was still happening in the background. The user then deleted the cluster in the Rancher 2 user interface. This led to a cluster in failed state, unable to actually deploy Kubernetes. It turned out that this person tried to create a new cluster in Rancher 2 but forgot to create required security groups (firewall rules). With this information we now have an exact timestamp (creationTimestamp) and the user id (creatorId).Īfter the user ID could be matched to another cluster administrator, we asked this user what happened on that day. $ kubectl get -namespace c-zs42v -o json By looking at the cluster registration tokens of this namespace, we can find out which user launched this operation: Our missing cluster!Īs we know from check_rancher2, there is a node stuck (trying) register in this cluster. 
In this particular situation we focus on the namespaces, as each cluster created by Rancher 2 (RKE) also creates a namespace in Kubernetes:Ĭluster-fleet-default-c-6p529-0a63de8fc176 Active 17mĬluster-fleet-default-c-dzfvn-db0ece01cc3b Active 17mĬluster-fleet-default-c-gsczw-b67c2a857200 Active 17mĬluster-fleet-default-c-hmgcp-684fbe9142cb Active 17mĬluster-fleet-default-c-pls9j-b8ab525e0c29 Active 17mĬluster-fleet-default-c-s2c8b-4e26ad7ae3c1 Active 17mĬluster-fleet-default-c-xjvzp-0b65f14fef6c Active 17mĬluster-fleet-default-c-zhsdr-955f3b1ac907 Active 17mĬluster-fleet-local-local-1a3d67d0a899 Active 17mĪll the known cluster IDs (seen before with the -t info check of check_rancher2) show up. The important part: There is no such cluster with the ID c-zs42v!īy running kubectl against the Rancher 2 (local) cluster, additional information from the Kubernetes API can be retrieved. check_rancher2.sh -H -U token-xxxxx -P "secret" -S -t infoĬHECK_RANCHER2 OK - Found 9 clusters: c-6p529 alias me-prod - c-dzfvn alias prod-ext - c-gsczw alias aws-prod - c-hmgcp alias prod-int - c-pls9j alias vamp - c-s2c8b alias gamma - c-xjvzp alias et-prod - c-zhsdr alias azure-prod - local alias local - and 25 projects: |'clusters'=9 'projects'=25 By using the -t info check type, all Kubernetes clusters (managed by this Rancher 2 setup) can be listed: Why is the node's name set to "null" instead of a real host name? Why is this particular node stuck in "registering" phase? And why does this not show up in the Rancher 2 user interface?Īt least the cluster name is shown by check_rancher2, so we have an additional hint to follow.

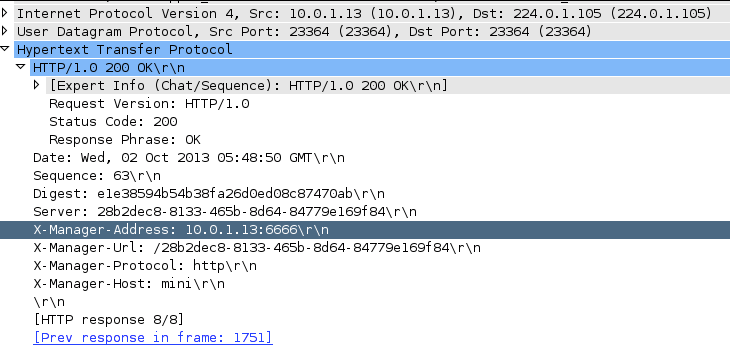

There are a couple of eyebrows which went up when this alert appeared. check_rancher2.sh -H -U token-xxxxx -P "secret" -S -t nodeĬHECK_RANCHER2 CRITICAL - null in cluster c-zs42v is registering -|'nodes_total'=67 'node_errors'=1 'node_ignored'=0

On the command line, the output looks like this:
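To make the jq filtering step easier to try without access to a Rancher 2 installation, here is a minimal sketch that runs the same kind of filter against an inline sample. The sample document is a hypothetical, heavily trimmed stand-in for an API response: only the field names and values quoted in this article are real, while the second node (other-node, 192.0.2.10) and the helper function name stuck_nodes are assumptions made for illustration.

```shell
#!/bin/sh
# Hypothetical, trimmed stand-in for the node list returned by the API.
# The first entry uses values quoted in the article; the second entry
# is invented purely so the filter has something to exclude.
sample='{"data":[
  {"clusterId":"c-zs42v","requestedHostname":"xyz-node1","ipAddress":"10.10.204.124","transitioningMessage":"waiting to register with Kubernetes"},
  {"clusterId":"c-6p529","requestedHostname":"other-node","ipAddress":"192.0.2.10","transitioningMessage":""}
]}'

# stuck_nodes JSON CLUSTER_ID
# Prints "hostname ip" for every node belonging to CLUSTER_ID.
# .data[] iterates over the node objects; == compares values
# (a single = would be an assignment in jq, not a comparison).
stuck_nodes() {
  printf '%s' "$1" | jq -r --arg cid "$2" \
    '.data[] | select(.clusterId == $cid) | "\(.requestedHostname) \(.ipAddress)"'
}

stuck_nodes "$sample" "c-zs42v"
```

Passing the cluster ID with --arg keeps the jq program itself free of shell quoting, which tends to be less error-prone than embedding the ID in the filter string.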

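The cross-check between the -t node alert and the -t info cluster list was done by eye in this case. As a rough sketch of how the same comparison could be scripted, the following takes the two plugin output lines quoted in this article as plain strings, extracts the cluster ID from the alert and looks it up in the list of known clusters. The variable names and the is_known helper are hypothetical; the plugin itself is not called here.

```shell
#!/bin/sh
# Plugin output lines as quoted in the article, stored as plain strings.
node_line="CHECK_RANCHER2 CRITICAL - null in cluster c-zs42v is registering -|'nodes_total'=67 'node_errors'=1 'node_ignored'=0"
info_line="CHECK_RANCHER2 OK - Found 9 clusters: c-6p529 alias me-prod - c-dzfvn alias prod-ext - c-gsczw alias aws-prod - c-hmgcp alias prod-int - c-pls9j alias vamp - c-s2c8b alias gamma - c-xjvzp alias et-prod - c-zhsdr alias azure-prod - local alias local - and 25 projects: |'clusters'=9 'projects'=25"

# Extract the word that follows "in cluster " from the node alert.
cluster_id=$(printf '%s\n' "$node_line" | sed -n 's/.* in cluster \([^ ]*\) .*/\1/p')

# is_known ID
# Succeeds if ID appears in the info output as "<ID> alias <name>".
is_known() {
  printf '%s\n' "$info_line" | grep -q -- " $1 alias "
}

if is_known "$cluster_id"; then
  status="known"
else
  status="unknown"
fi

echo "$cluster_id is $status"
```

With the two lines above, the script reports c-zs42v as unknown, which matches the manual finding that the alerting node belongs to a cluster Rancher 2 no longer lists.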